Computer & electronics hardware

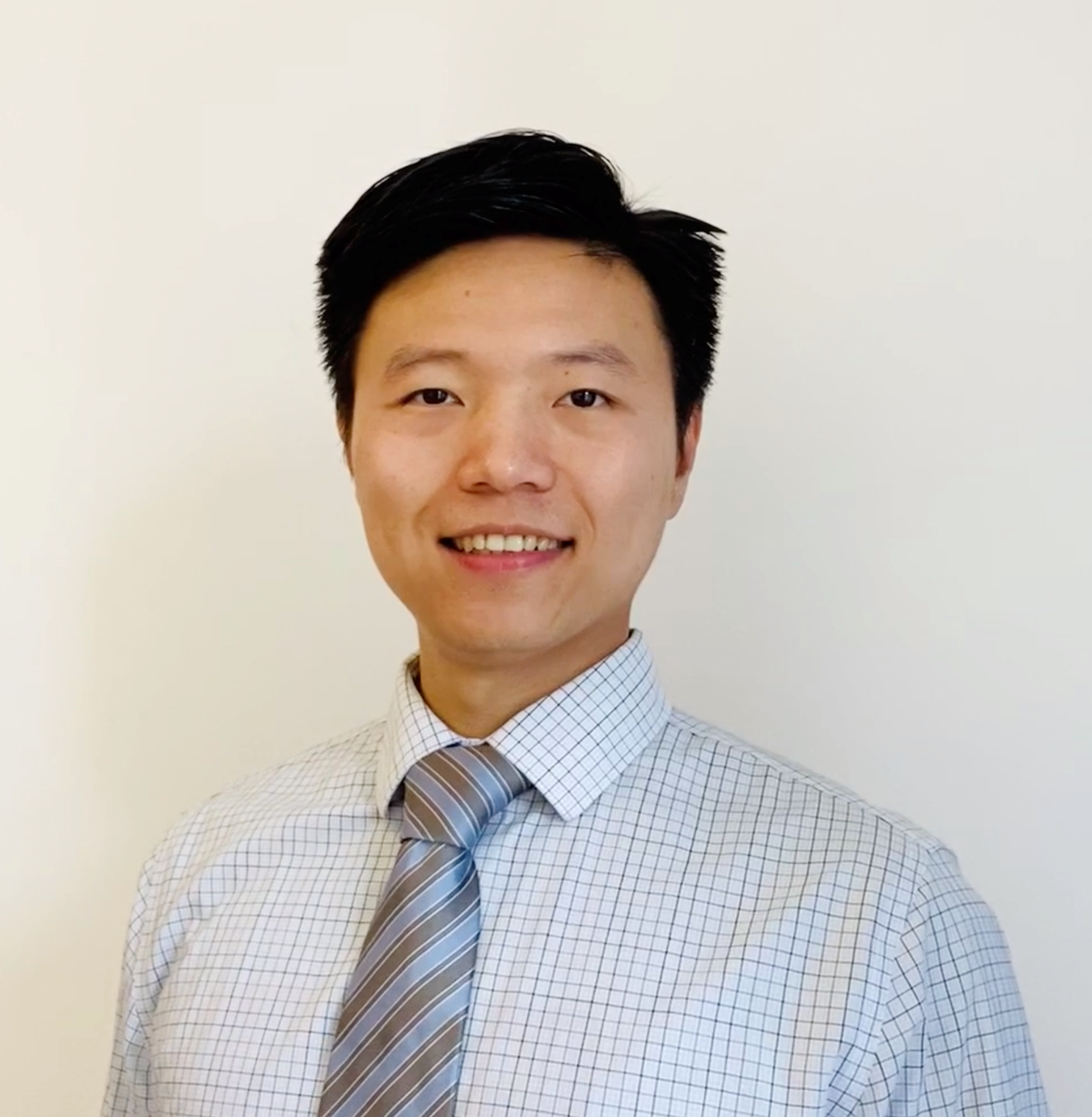

Bolei Zhou

Making AI models more understandable and trustworthy to humans

Japan

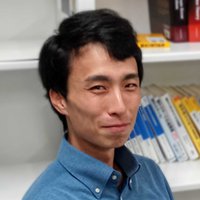

Yoichi Ochiai

A visionary for the advent of "Digital Nature". His creative work as a media artist is also highly regarded.

China

Tianchi Li

Developed programming tools that help children learn to code more effectively.

Japan

Kosuke Mitarai

Built the world's first machine learning algorithm for quantum computers and co-founded a venture that develops software for them.

Asia Pacific

Katherine A. Kim

Power Electronics to Maximize Solar Photovoltaic Power for Emerging Applications